🧊 Nemotron-Cascade-2-30B-A3B · HLWQ Q5 (Mamba-aware)

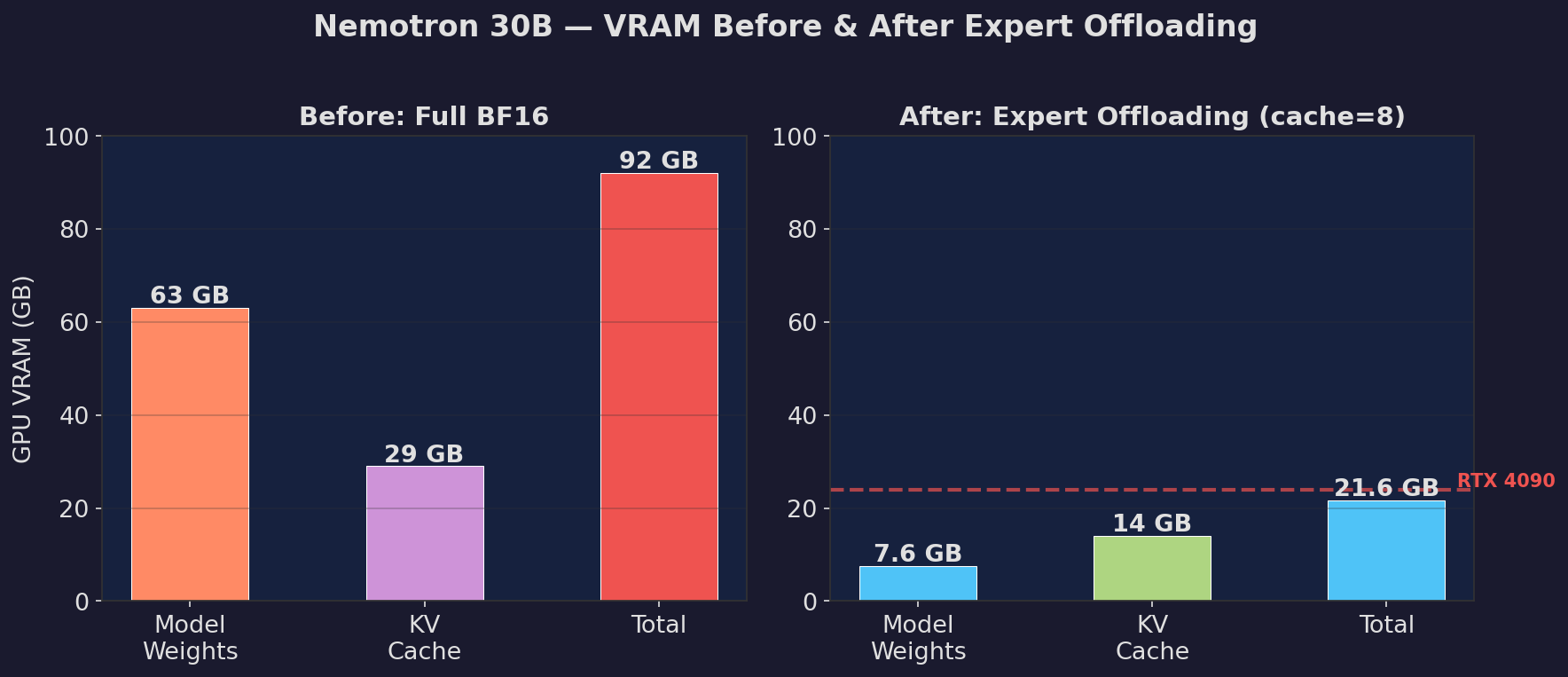

A 30B hybrid Mamba + MoE model running in 7.6 GB of model VRAM at roughly 15 tok/s, with correct output, on an RTX 4090.

🎯 Benchmark Results

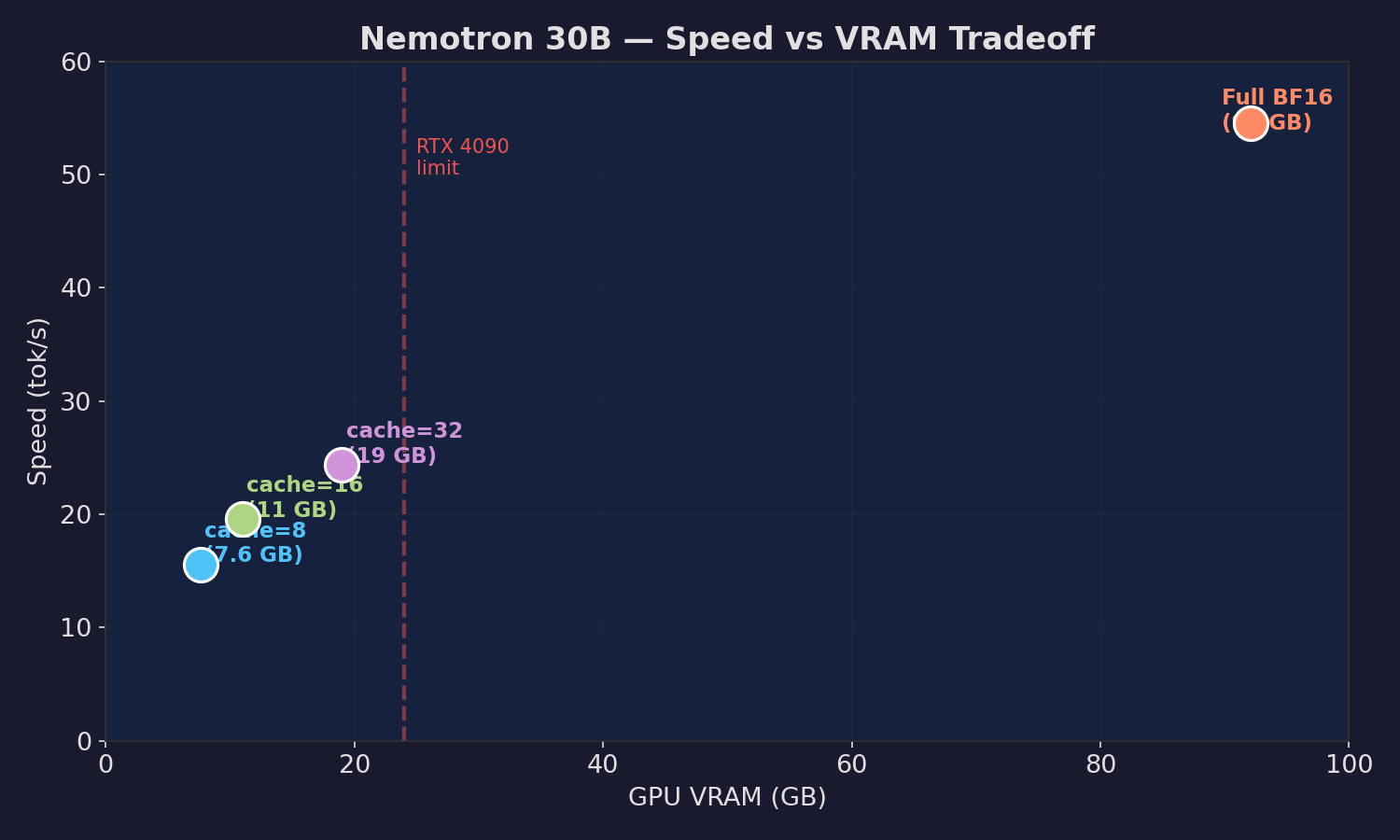

| Config | tok/s | Model VRAM | Quality |
|---|---|---|---|
| Full BF16 (baseline) | 54.5 | 92 GB | Perfect |
| Expert cache=8 (LFRU) | 16.4 | 7.6 GB | Perfect |
| Expert cache=8 (LRU) | 14.6-16.9 | 7.6 GB | Perfect |
| Expert cache=16 | 19.6 | 11 GB | Perfect |
| Expert cache=32 | 24.4 | 19 GB | Perfect |

🚀 Quick Start — BF16 base + expert offload fork (recommended)

The fastest and most reliable path uses the BF16 base model with the vLLM expert offload fork. The MoE experts live in CPU pinned memory with an LFRU cache on GPU.
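To make the caching idea concrete, here is a minimal toy sketch of a per-layer "hot expert" cache. It is not the fork's implementation: the class name `ToyExpertCache`, the `load_from_cpu` callback, and the eviction rule (evict the lowest hit count, break ties by least recent use) are all assumptions chosen for illustration, since the card does not spell out the exact LFRU policy.

```python
from collections import OrderedDict


class ToyExpertCache:
    """Toy cache of hot experts for one MoE layer (illustrative only)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.slots = OrderedDict()  # expert_id -> weights, ordered oldest use first
        self.hits = {}              # expert_id -> access count while resident
        self.misses = 0

    def get(self, expert_id, load_from_cpu):
        """Return the expert's weights, loading them on a cache miss."""
        if expert_id in self.slots:
            self.slots.move_to_end(expert_id)  # mark as most recently used
            self.hits[expert_id] += 1
            return self.slots[expert_id]
        self.misses += 1
        if len(self.slots) >= self.capacity:
            # min() scans in recency order, so ties fall to the least recently used.
            victim = min(self.slots, key=lambda e: self.hits[e])
            del self.slots[victim]
            del self.hits[victim]
        # Hypothetical stand-in for the pinned-memory -> GPU copy.
        self.slots[expert_id] = load_from_cpu(expert_id)
        self.hits[expert_id] = 1
        return self.slots[expert_id]
```

With a small capacity, experts that the router selects often stay resident while rarely used ones are reloaded from CPU memory on demand, which is the trade-off between VRAM and tok/s shown in the benchmark table.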

Requirements:

- GPU: 24+ GB VRAM (RTX 3090/4090 or better)

- CPU RAM: 64 GB (expert weights stored in pinned memory)

- CUDA: 12.0+

- Python: 3.10+

```bash
# The vLLM expert offload PR #37190 is open (not yet merged).
# Install the working fork until it lands in mainline:
pip install git+https://github.com/caiovicentino/vllm-expert-offload.git
```

```python
from vllm import LLM, SamplingParams

llm = LLM(
    model='nvidia/Nemotron-Cascade-2-30B-A3B',
    trust_remote_code=True,
    dtype='bfloat16',
    max_model_len=4096,
    enforce_eager=True,
    moe_expert_cache_size=8,  # LFRU cache of 8 hot experts per layer
    kernel_config={'moe_backend': 'triton'},
    gpu_memory_utilization=0.95,
)

out = llm.generate(['What is 2+3?'], SamplingParams(max_tokens=200))
print(out[0].outputs[0].text)
```

Cache size guide

| Cache | Model VRAM | Speed | Target GPU |
|---|---|---|---|
| 8 | ~7.6 GB | ~16 tok/s | RTX 4090 (24 GB) |
| 16 | ~11 GB | ~20 tok/s | RTX 4090 (24 GB) |
| 32 | ~19 GB | ~25 tok/s | RTX 4090 (24 GB) |
| 64 | ~34 GB | ~35 tok/s | A6000 (48 GB) |
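As a rough aid for picking a value for `moe_expert_cache_size`, the table above can be encoded directly. The helper name `pick_cache_size` and the 4 GB `reserve_gb` headroom (for KV cache, activations, and other processes) are assumptions for illustration, not measured values.

```python
# (cache size, approx. model VRAM in GB, approx. tok/s), copied from the table above.
CACHE_GUIDE = [(8, 7.6, 16), (16, 11.0, 20), (32, 19.0, 25), (64, 34.0, 35)]


def pick_cache_size(total_vram_gb, reserve_gb=4.0):
    """Return the largest table row whose model VRAM fits the budget, or None."""
    budget = total_vram_gb - reserve_gb  # reserve_gb is a hypothetical safety margin
    fitting = [row for row in CACHE_GUIDE if row[1] <= budget]
    return max(fitting, key=lambda row: row[0]) if fitting else None


if __name__ == "__main__":
    for vram in (16, 24, 48):
        print(vram, "GB ->", pick_cache_size(vram))
```

The figures are approximate, so treat the result as a starting point and lower the cache size if the model fails to load at your chosen `max_model_len`.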

📦 What this repo contains

This repo is the HLWQ Q5 quantized version of Nemotron-Cascade-2-30B-A3B:

- 20.6 GB total (vs ~60 GB BF16 = 2.9× smaller)

- polarengine_v5 format (Mamba-aware HLWQ with per-layer codes + Lloyd-Max centroids + block norms)

- 6,006 layers quantized across 52 transformer blocks + Mamba mixer layers

- 18,232 weight tensors (including codes/ct/norms triplets for quantized Linear + Mamba in/out projections)

🔬 Alternative: dequant + serve on A100/H100

For GPUs with 64+ GB VRAM where expert offload is unnecessary, you can dequantize the HLWQ codes to BF16 and serve them directly:

```bash
# Dequant HLWQ Q5 codes → BF16 safetensors
pip install polarengine-vllm
polarquant-convert caiovicentino1/Nemotron-Cascade-2-30B-A3B-HLWQ-Q5 /tmp/nemotron-bf16

# Then serve with mainline vLLM
vllm serve /tmp/nemotron-bf16 --trust-remote-code --dtype bfloat16
```

This produces a ~60 GB BF16 checkpoint that fits on a single A100 80 GB or H100 80 GB.
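Once `vllm serve` is running it exposes an OpenAI-compatible HTTP API. The sketch below builds a completion request using only the standard library. It assumes the server listens on vLLM's default `http://localhost:8000` and that the served model name is the path passed to `vllm serve` (the default unless you override it); adjust both if your setup differs.

```python
import json
import urllib.request


def build_completion_request(prompt, model="/tmp/nemotron-bf16",
                             base_url="http://localhost:8000", max_tokens=200):
    """Build a POST request for the OpenAI-compatible /v1/completions endpoint."""
    payload = {"model": model, "prompt": prompt, "max_tokens": max_tokens}
    return urllib.request.Request(
        f"{base_url}/v1/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def complete(prompt, **kwargs):
    """Send the request and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_completion_request(prompt, **kwargs)) as resp:
        return json.load(resp)["choices"][0]["text"]
```

Calling `complete("What is 2+3?")` against a live server should return the same kind of output as the offline `llm.generate` example in the Quick Start.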

🔧 Method

HLWQ Q5 = Walsh-Hadamard rotation + Lloyd-Max 5-bit scalar quantization over blocks of 128 values, with Mamba-specific adaptations:

- Attention projections (q/k/v/o): HLWQ Q5

- MoE expert weights (128 experts × top-6): HLWQ Q5 per-expert

- Mamba mixer in_proj / out_proj: HLWQ Q5 (Mamba-aware block handling)

- Mamba SSM params (A_log, D, dt_bias, conv1d): BF16 (critical for recurrent state correctness)

- Norms, router gates, embed, lm_head: BF16

- Shared experts: HLWQ Q5
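The core recipe (rotate each 128-value block, then snap every coordinate to one of 32 Lloyd-Max centroids) can be sketched in NumPy as follows. This is a simplified illustration under stated assumptions, not the polarengine_v5 implementation: normalizing each block before rotation, fitting the centroids on synthetic Gaussian samples, and storing codes unpacked as `uint8` are choices made here for brevity, and the Mamba-aware block handling is not reproduced.

```python
import numpy as np

BLOCK = 128   # values per quantization block
LEVELS = 32   # 5 bits -> 32 centroids


def hadamard(n):
    """Orthonormal Walsh-Hadamard matrix (Sylvester construction, n a power of 2)."""
    h = np.array([[1.0]])
    while h.shape[0] < n:
        h = np.block([[h, h], [h, -h]])
    return h / np.sqrt(n)


def lloyd_max(samples, levels=LEVELS, iters=30):
    """1-D Lloyd-Max: alternate nearest-centroid assignment and centroid means."""
    centroids = np.quantile(samples, (np.arange(levels) + 0.5) / levels)
    for _ in range(iters):
        edges = (centroids[:-1] + centroids[1:]) / 2  # decision boundaries
        idx = np.searchsorted(edges, samples)
        for k in range(levels):
            members = samples[idx == k]
            if members.size:
                centroids[k] = members.mean()
    return centroids


def quantize(weights, centroids):
    """Return 5-bit codes (stored as uint8) and one fp16 norm per block."""
    blocks = weights.reshape(-1, BLOCK).astype(np.float64)
    norms = np.maximum(np.linalg.norm(blocks, axis=1, keepdims=True), 1e-12)
    # Rotate unit-norm blocks; scaling by sqrt(BLOCK) gives roughly unit variance.
    rotated = (blocks / norms) @ hadamard(BLOCK) * np.sqrt(BLOCK)
    edges = (centroids[:-1] + centroids[1:]) / 2
    codes = np.searchsorted(edges, rotated).astype(np.uint8)
    return codes, norms.astype(np.float16)


def dequantize(codes, norms, centroids):
    """Look up centroids, undo the rotation (H is its own inverse), rescale."""
    rotated = centroids[codes] / np.sqrt(BLOCK)
    return (rotated @ hadamard(BLOCK)) * norms.astype(np.float64)


rng = np.random.default_rng(0)
centroids = lloyd_max(rng.standard_normal(20_000))  # synthetic calibration data
w = rng.standard_normal((64, BLOCK))                # stand-in for a weight matrix
codes, norms = quantize(w, centroids)
w_hat = dequantize(codes, norms, centroids)
cos = float(np.sum(w * w_hat) / (np.linalg.norm(w) * np.linalg.norm(w_hat)))
print(f"cosine similarity vs original: {cos:.4f}")
```

The rotation spreads outliers across the block so that a single scalar codebook fits all coordinates reasonably well, which is why a shared set of 32 centroids can be used instead of per-channel scales.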

Per-weight compression: a 5-bit code per value plus one fp16 norm per 128-value block (16/128 = 0.125 bits) gives ~5.125 bits/value. Cosine similarity vs BF16: >0.997 on sampled probes.
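The bits-per-value figure can be checked with a few lines of arithmetic. The comparison with the repo-level ratio uses the sizes quoted in this card; attributing the gap to the tensors kept in BF16 is an inference from the Method list, not a measured breakdown.

```python
BLOCK = 128      # values per quantization block
CODE_BITS = 5    # one Q5 code per value
NORM_BITS = 16   # one fp16 norm per block

bits_per_value = CODE_BITS + NORM_BITS / BLOCK
ratio_vs_bf16 = 16 / bits_per_value   # for tensors that are actually quantized
repo_ratio = 60 / 20.6                # checkpoint sizes quoted in this card (GB)

print(f"bits/value:             {bits_per_value}")
print(f"quantized tensors only: {ratio_vs_bf16:.2f}x smaller than BF16")
print(f"whole repo:             {repo_ratio:.2f}x smaller than BF16")
```

The whole-repo ratio comes out lower than the per-tensor ratio, presumably because the SSM parameters, norms, router gates, embeddings, and lm_head stay in BF16.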

🏷️ Note on naming

HLWQ replaces the author's earlier "PolarQuant" branding to disambiguate from Han et al. 2025 (arXiv:2502.02617), a distinct KV-cache quantization method published under the same name.

- HLWQ (this work): weight quantization via deterministic Walsh-Hadamard rotation + Lloyd-Max scalar codebook

- Han et al. 2025: KV cache quantization via random polar rotation

The two techniques address different components (weights vs KV cache) and are unrelated.

Internally, the quant_method field in config.json remains "polarengine" — that is the wire-format string recognized by transformers and vLLM loaders. Brand is HLWQ; wire format is polarengine.
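If you want to confirm which wire-format string a downloaded checkpoint declares, a small check like the one below may help. It assumes the field sits under a `quantization_config` section of config.json, as is common for Hugging Face checkpoints, and falls back to a top-level key otherwise; the function names are illustrative.

```python
import json
from pathlib import Path


def quant_method_from_config(config):
    """Extract quant_method from a parsed config.json dictionary."""
    # Assumption: the field is nested under "quantization_config" when present.
    section = config.get("quantization_config", config)
    return section.get("quant_method")


def read_quant_method(config_path):
    """Load config.json from disk and return its quant_method (or None)."""
    return quant_method_from_config(json.loads(Path(config_path).read_text()))
```

For this repo the expected value is "polarengine", regardless of the HLWQ branding used in the documentation.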

📖 Citation

```bibtex
@misc{vicentino2026hlwq,
  title = {Hadamard-Lloyd Weight Quantization (HLWQ): Near-Lossless 5-bit PTQ for LLMs via Walsh-Hadamard Rotation and Lloyd-Max Scalar Quantization},
  author = {Vicentino, Caio},
  year = {2026},
  eprint = {2603.29078},
  archivePrefix = {arXiv},
  note = {Formerly titled "PolarQuant"; v2 retitle pending to avoid collision with Han et al. 2025.}
}
```

🔗 References

- Paper (HLWQ): arXiv:2603.29078

- Code (HLWQ): github.com/caiovicentino/eoq-quantization

- vLLM expert offload fork (PR #37190 is open): github.com/caiovicentino/vllm-expert-offload

- Base model: nvidia/Nemotron-Cascade-2-30B-A3B (30B hybrid Mamba + MoE)

- Related (distinct method): Han, Kacham, Karbasi, Mirrokni, Zandieh. "PolarQuant: Quantizing KV caches with polar transformation." arXiv:2502.02617, 2025.

🙏 Acknowledgements

Built on NVIDIA's Nemotron-Cascade-2-30B-A3B hybrid Mamba + MoE architecture. Thanks to the vLLM team for the expert offload review (PR #37190 in progress) and to Elnur Abdullaev (e1n00r) for driving the upstream implementation.
